Screens

Four surfaces, one connected system

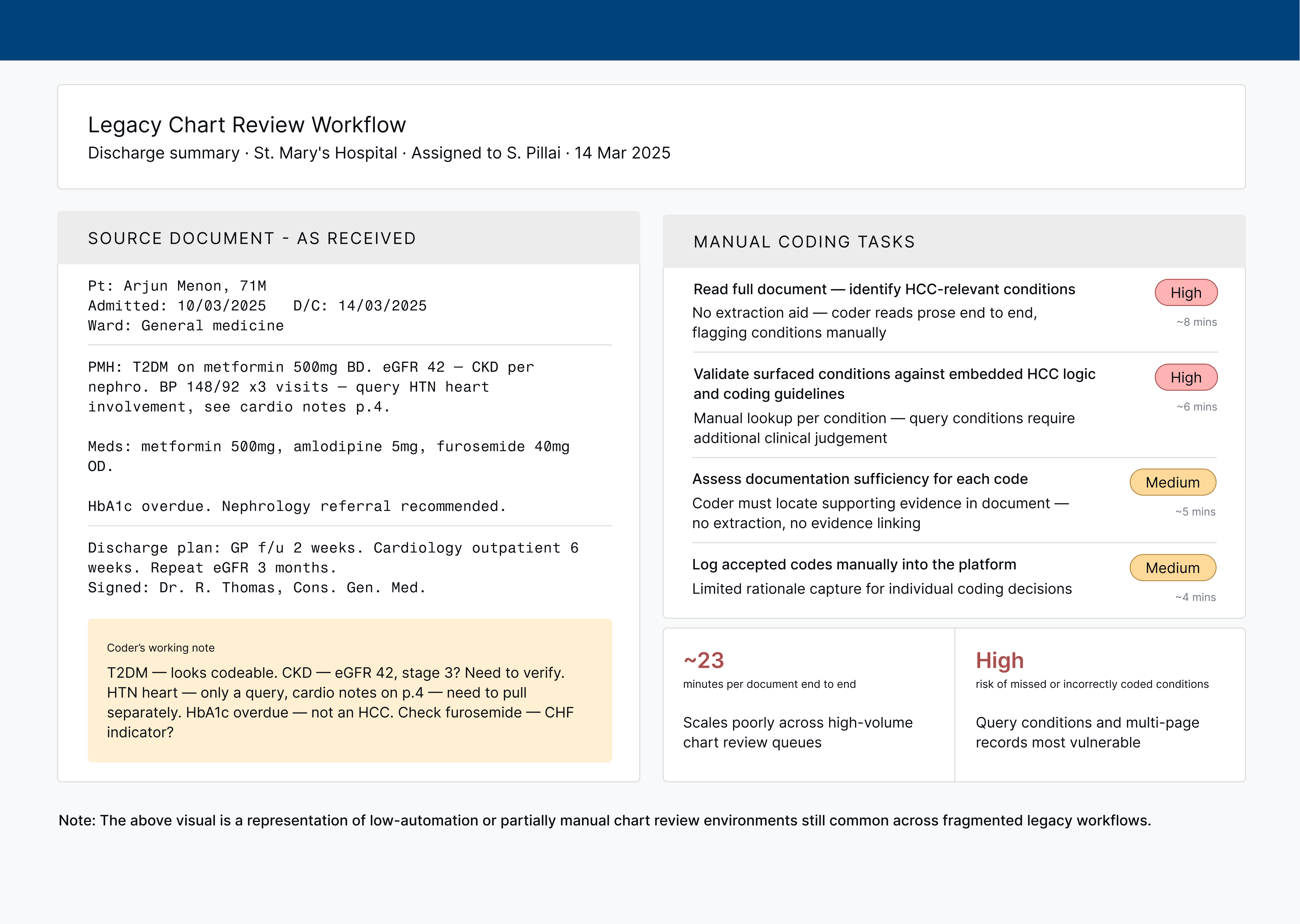

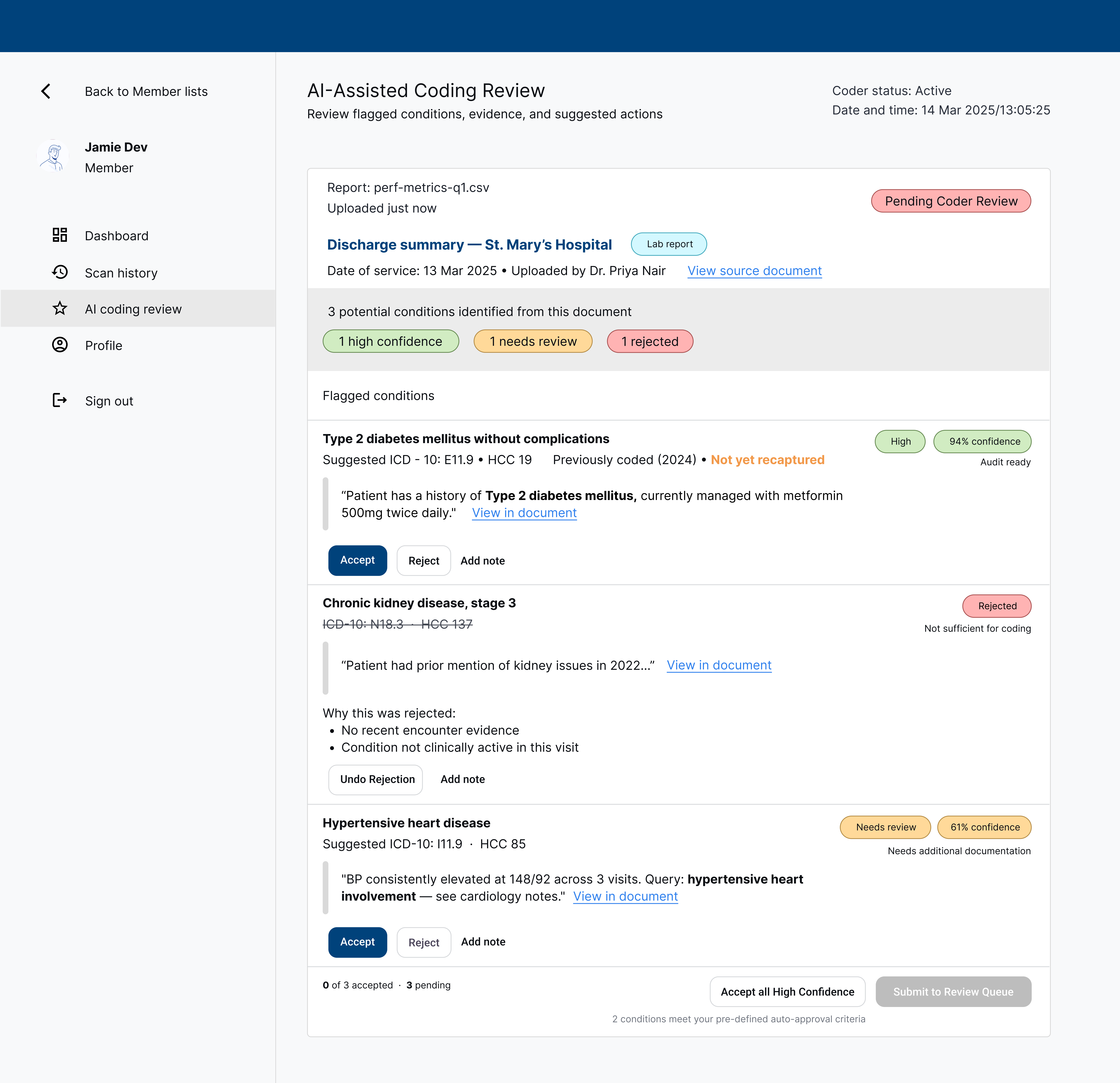

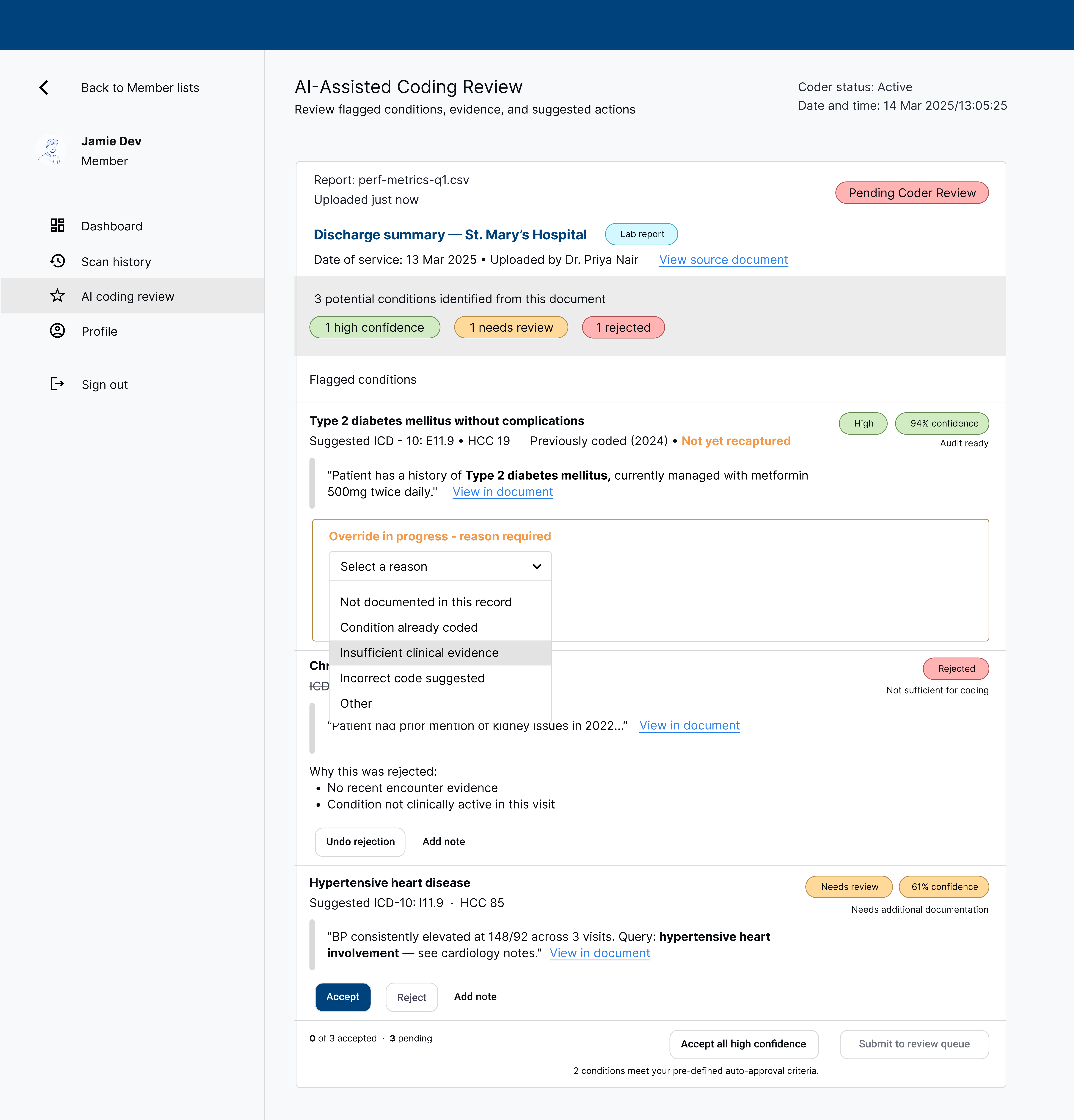

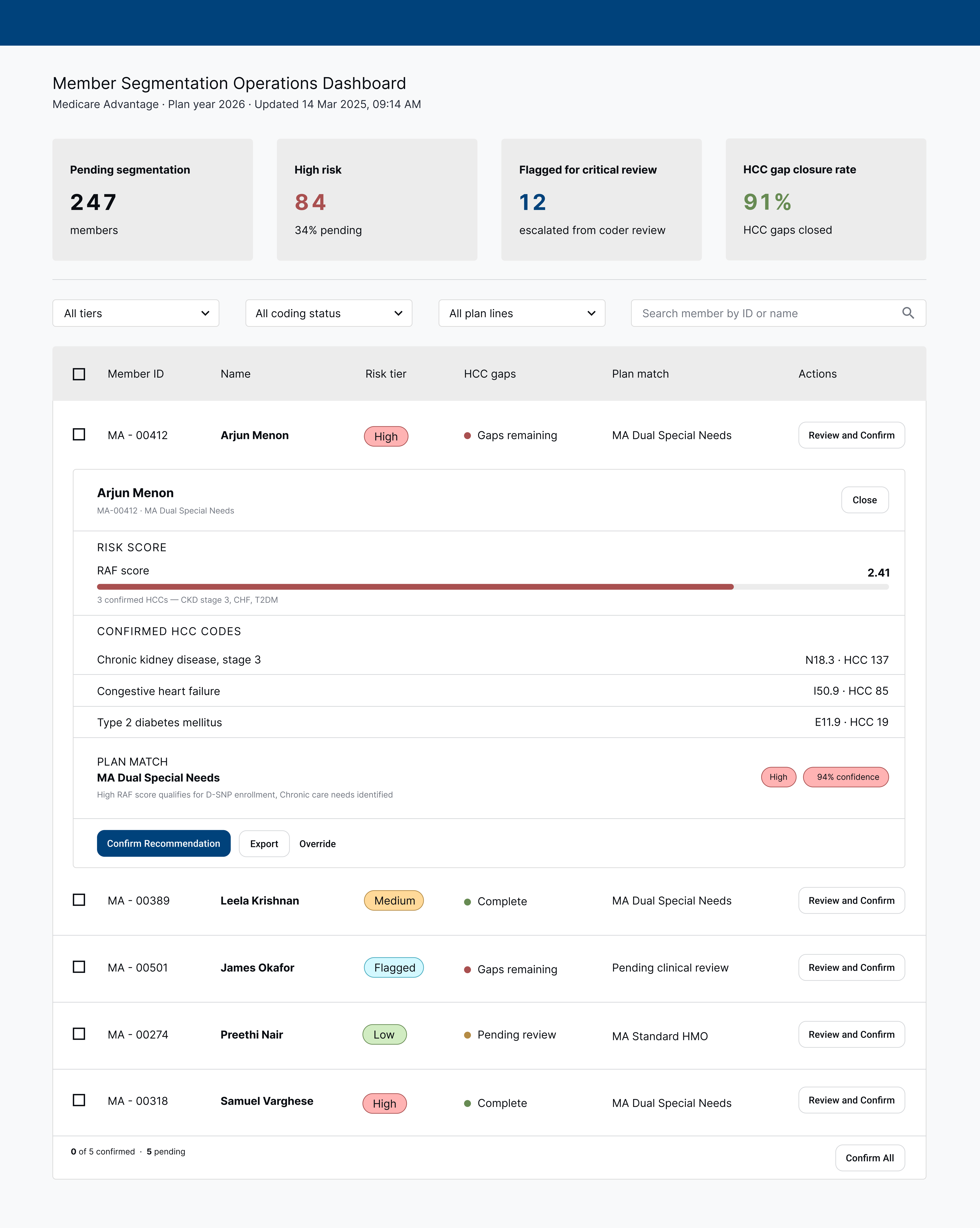

Each screen corresponds to a distinct moment in the pipeline — from document upload to clinical encounter. The design decisions in each surface reflect who's using it, what they need to trust, and what action the system is asking them to take.

Each scanned document produces one card. In the example shown, three conditions are flagged from a discharge summary: Type 2 diabetes mellitus at high confidence (94%), CKD stage 3 rejected with a reason ("not sufficient for coding"), and hypertensive heart disease at 61%, needing review. Each flag shows the exact evidence snippet from the source text and an Accept / Reject / Add note action set. A bulk-accept control at the bottom lets coders accept all high-confidence flags without reviewing each one individually.
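The bulk-accept behavior can be sketched in a few lines. This is a minimal illustration, not the product's implementation: the `Flag` type, the `bulk_accept` function, and the 0.90 cutoff for "high confidence" are all assumptions, since the screen shows only the 94% and 61% values and does not state a threshold.

```python
from dataclasses import dataclass
from typing import List, Optional

# Assumed cutoff for "high confidence"; the design does not state one.
HIGH_CONFIDENCE = 0.90


@dataclass
class Flag:
    """One flagged condition on a document card (hypothetical shape)."""
    condition: str
    confidence: Optional[float]  # None where the screen shows no score
    status: str = "pending"      # "pending", "accepted", or "rejected"


def bulk_accept(flags: List[Flag], threshold: float = HIGH_CONFIDENCE) -> List[Flag]:
    """Accept every pending flag at or above the threshold; leave the rest untouched."""
    accepted = []
    for flag in flags:
        if (
            flag.status == "pending"
            and flag.confidence is not None
            and flag.confidence >= threshold
        ):
            flag.status = "accepted"
            accepted.append(flag)
    return accepted


# The three flags from the example card. The CKD score is not shown, so it stays None.
card = [
    Flag("Type 2 diabetes mellitus", 0.94),
    Flag("CKD stage 3", None, status="rejected"),
    Flag("Hypertensive heart disease", 0.61),
]

accepted = bulk_accept(card)
print([f.condition for f in accepted])
```

Under these assumptions only the diabetes flag is accepted. The 61% flag stays pending for individual review, and the already-rejected flag is not touched.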

Override expands inline, with no modal or navigation away from the queue. A reason dropdown surfaces five options: not documented in this record, condition already coded, insufficient clinical evidence, incorrect code suggested, and other. Selecting "Incorrect code suggested" would reveal a code substitution field, feeding a correction signal back to the AI model. Friction is calibrated to the flag's confidence: overriding a high-confidence flag requires a reason, while overriding a lower-confidence flag does not.
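The friction rule can be expressed as a small validation function. The sketch below is illustrative only: `validate_override`, the 0.90 cutoff, and the rule that a substitute code is accepted only alongside "Incorrect code suggested" are assumptions drawn from the description above, not confirmed behavior.

```python
from typing import List, Optional

# The five dropdown options described for the override panel.
OVERRIDE_REASONS = (
    "Not documented in this record",
    "Condition already coded",
    "Insufficient clinical evidence",
    "Incorrect code suggested",
    "Other",
)

# Assumed cutoff for "high confidence"; the design does not state one.
HIGH_CONFIDENCE = 0.90


def validate_override(
    confidence: float,
    reason: Optional[str] = None,
    substitute_code: Optional[str] = None,
) -> List[str]:
    """Return a list of validation errors; an empty list means the override may proceed."""
    errors = []
    if reason is not None and reason not in OVERRIDE_REASONS:
        errors.append("unknown reason")
    # Friction scales with confidence: only high-confidence overrides need a reason.
    if confidence >= HIGH_CONFIDENCE and reason is None:
        errors.append("reason required for high-confidence override")
    # The substitution field only appears for "Incorrect code suggested" (assumed rule).
    if substitute_code is not None and reason != "Incorrect code suggested":
        errors.append("substitute code only valid with 'Incorrect code suggested'")
    return errors


# Overriding the 61% flag needs no reason; overriding the 94% flag does.
low = validate_override(0.61)
high_missing = validate_override(0.94)
# "E11.65" is an illustrative ICD-10 code, not taken from the screens.
high_ok = validate_override(0.94, "Incorrect code suggested", substitute_code="E11.65")
print(low, high_missing, high_ok)
```

Returning a list of errors rather than raising lets the inline panel show every problem at once without leaving the queue.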

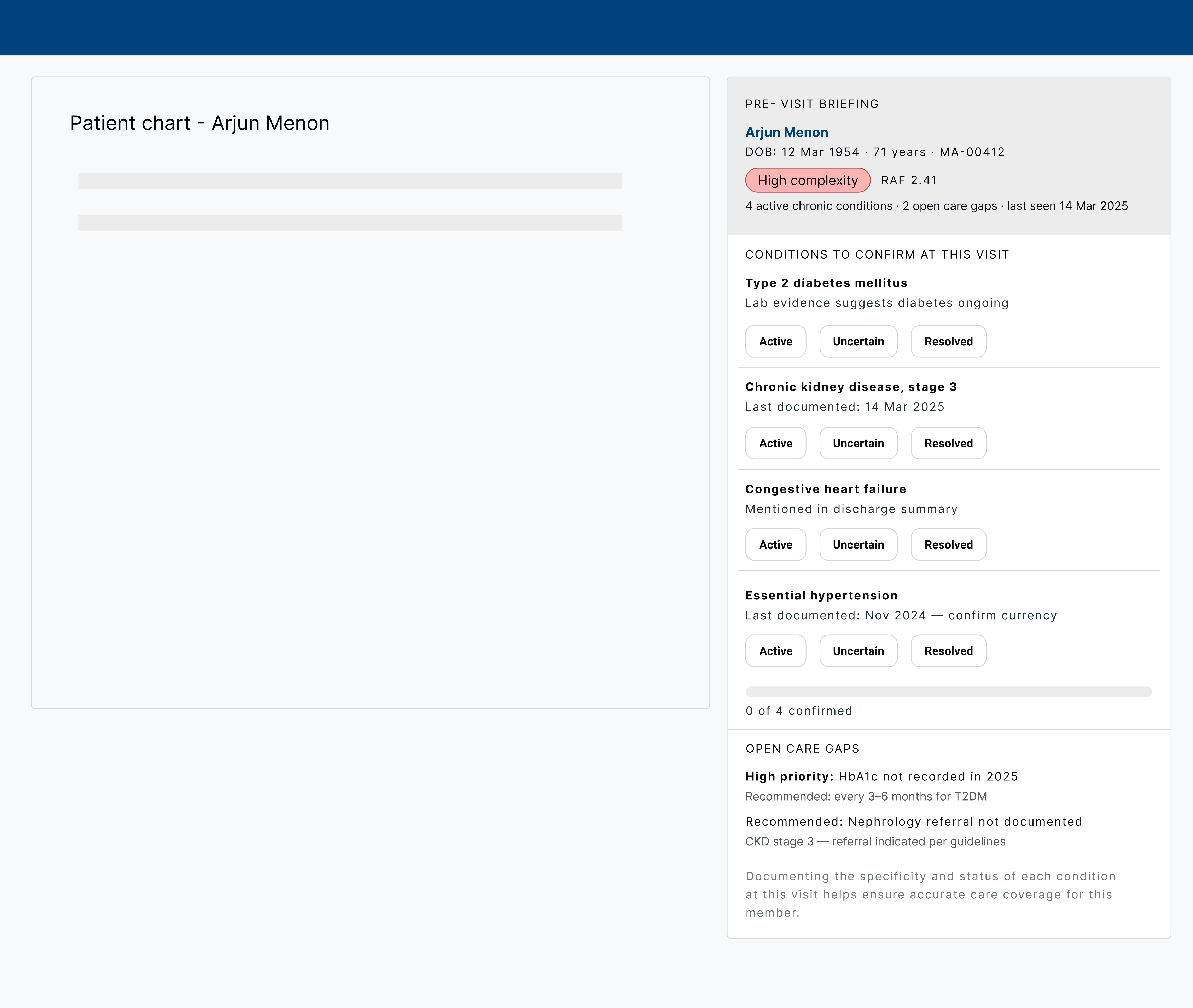

This view is shared by risk adjustment and enrollment ops. Four KPI tiles surface the pipeline at a glance: 247 members pending segmentation, 84 high risk, 12 flagged for critical review, and a 91% HCC gap closure rate. Each member row shows risk tier, gap status, and plan match. Expanding a row (shown for Arjun Menon) reveals the RAF score bar, confirmed HCC codes, and a plan match recommendation with a confidence score. Each of these can be acted on with Confirm, Export, or Override.

This surface is a sidebar panel embedded in the existing EHR alongside the patient chart. No ICD-10 codes are visible, only condition names, which keeps the frame clinical rather than billing-oriented. Four conditions are listed with Active / Uncertain / Resolved toggles so the provider can confirm at the visit whether each is still current. Below, two open care gaps are framed as clinical recommendations: a high-priority HbA1c flag and a nephrology referral. The CDI nudge appears last, after clinical context has established trust.